
Getting Started Fishing

Amanda Olsen

April 19, 2024

Double Emergence: Cicadas Taking Over Midwest

Amanda Olsen

April 12, 2024

Sloth Saga Enters New Phase

Cole McDonnell

April 5, 2024

Officials Unite Against State Housing Plan

Amanda Olsen

April 23, 2024

Children’s Service Providers Get Crucial Pay Bump

Anton Media Group Staff

April 23, 2024

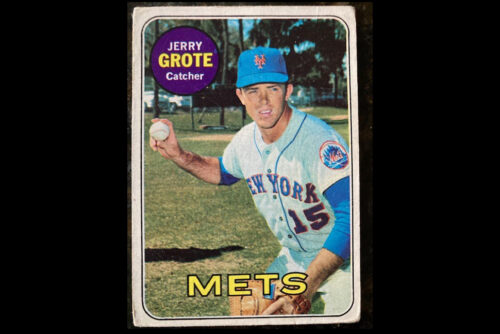

Fond Memories Of Jerry Grote

Amanda Olsen

April 22, 2024

Opponents Of ‘Blakeman’s Militia’ Rally In Mineola

Janet Burns

April 12, 2024

Attacking Our Courts Undermines America

Jerry Kremer

April 22, 2024

Can The MTA Manage $15 Billion In Carryover Capital Projects Along With The Next $51 Billion Or More?

Larry Penner

April 15, 2024

Shine A Light For Charity On The INN

Kayla Donnenfeld

April 15, 2024

The Big Move

Marisa Cohen

April 15, 2024


TRENDING

(Photo from NBCUniversal)

Chuck Scarborough: A Beacon of Journalistic Longevity

By Christy Hinko • April 22, 2024
